NARC: Designing trust into AI-assisted detection

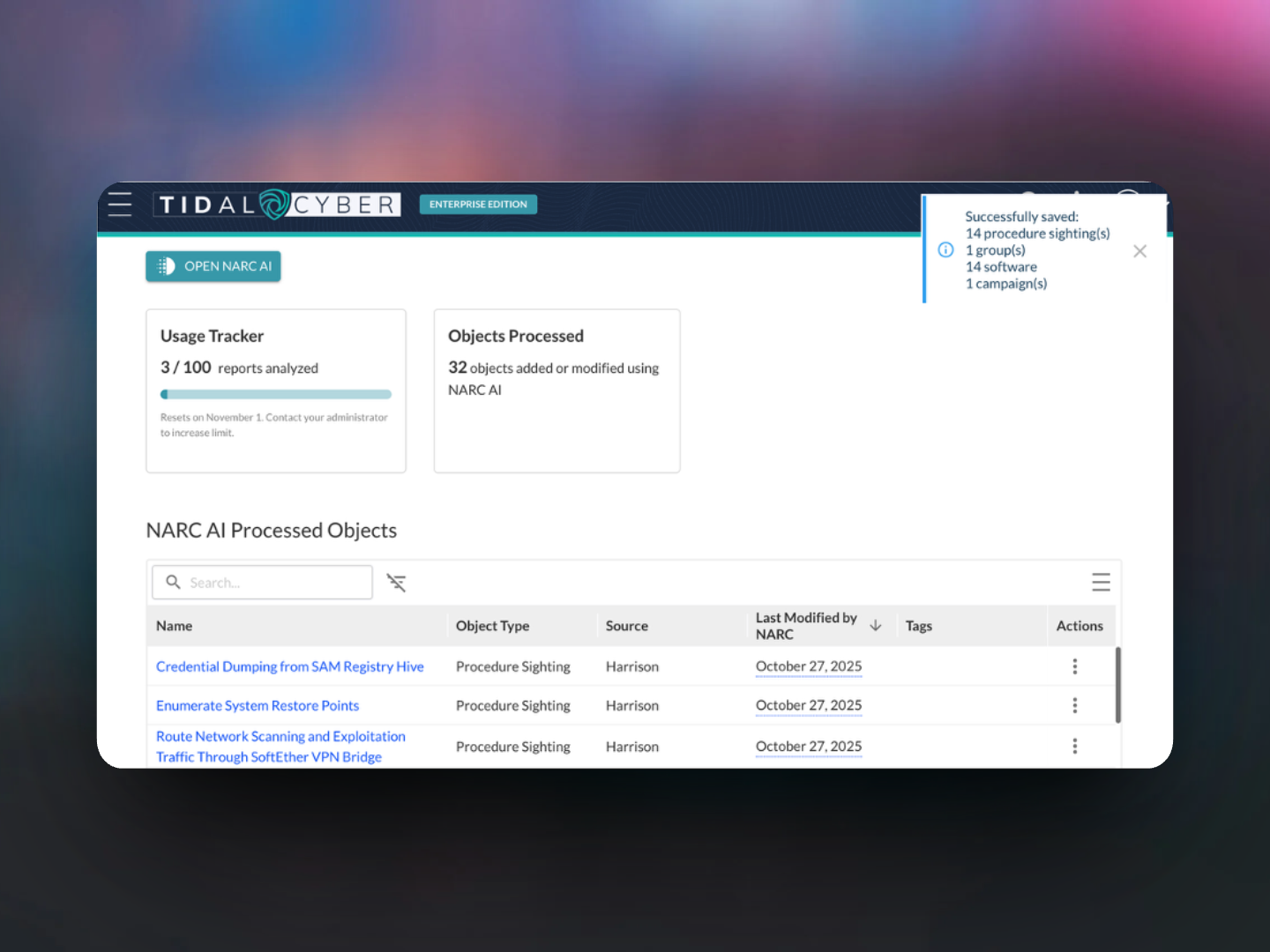

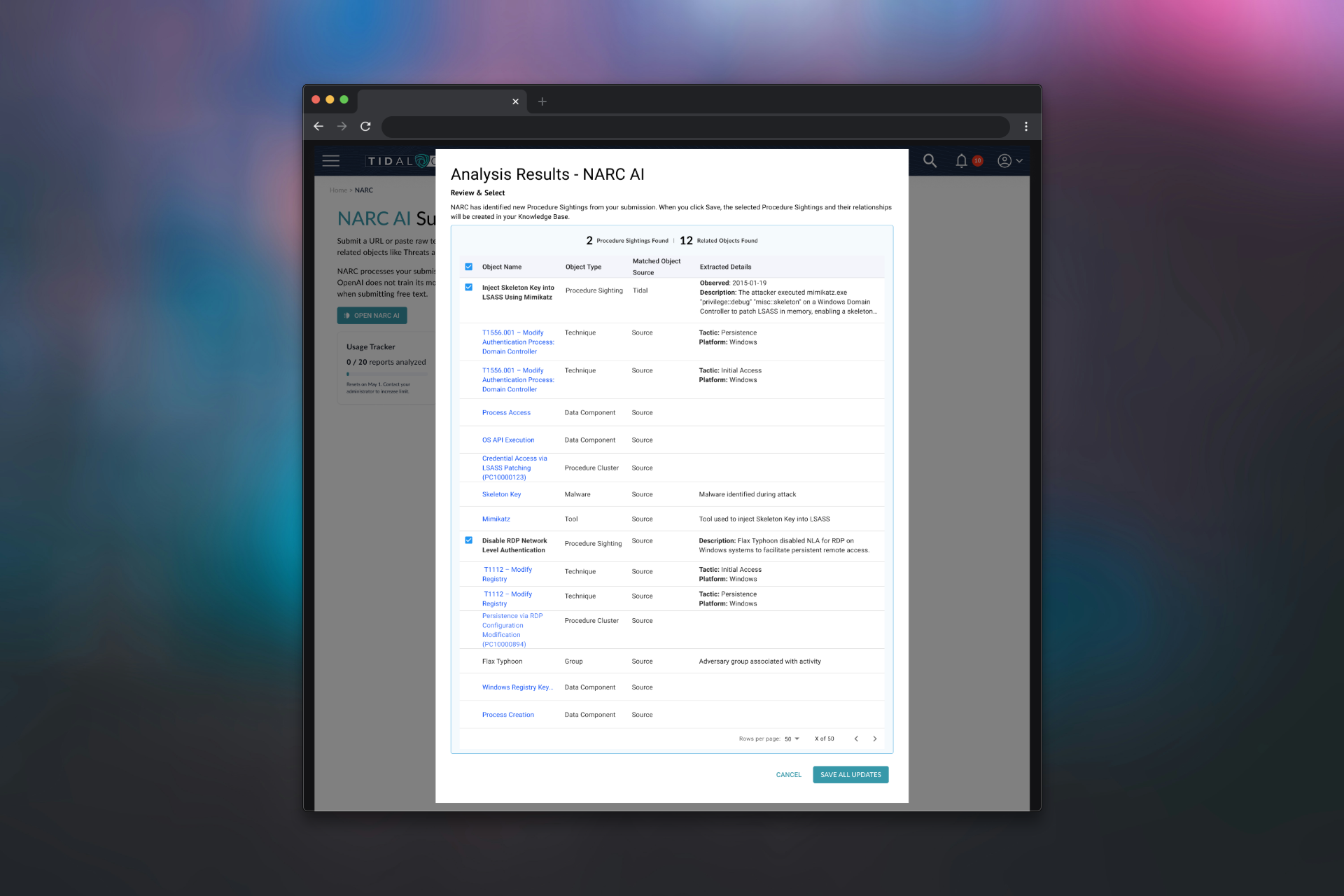

NARC used an LLM to extract intelligence from security procedures and surface findings analysts could act on. The design challenge was making those outputs trustworthy — not just useful — for analysts making real security decisions under pressure. Across three versions, every design decision was a trust decision.

The AI worked. Getting analysts to trust it to the right degree was the harder problem.

Security analysts are skeptical by training. They've seen tools overpromise, produce false positives, and degrade into noise. An AI feature that surfaced findings without explaining itself — without showing its work — was going to get ignored or, worse, misused.

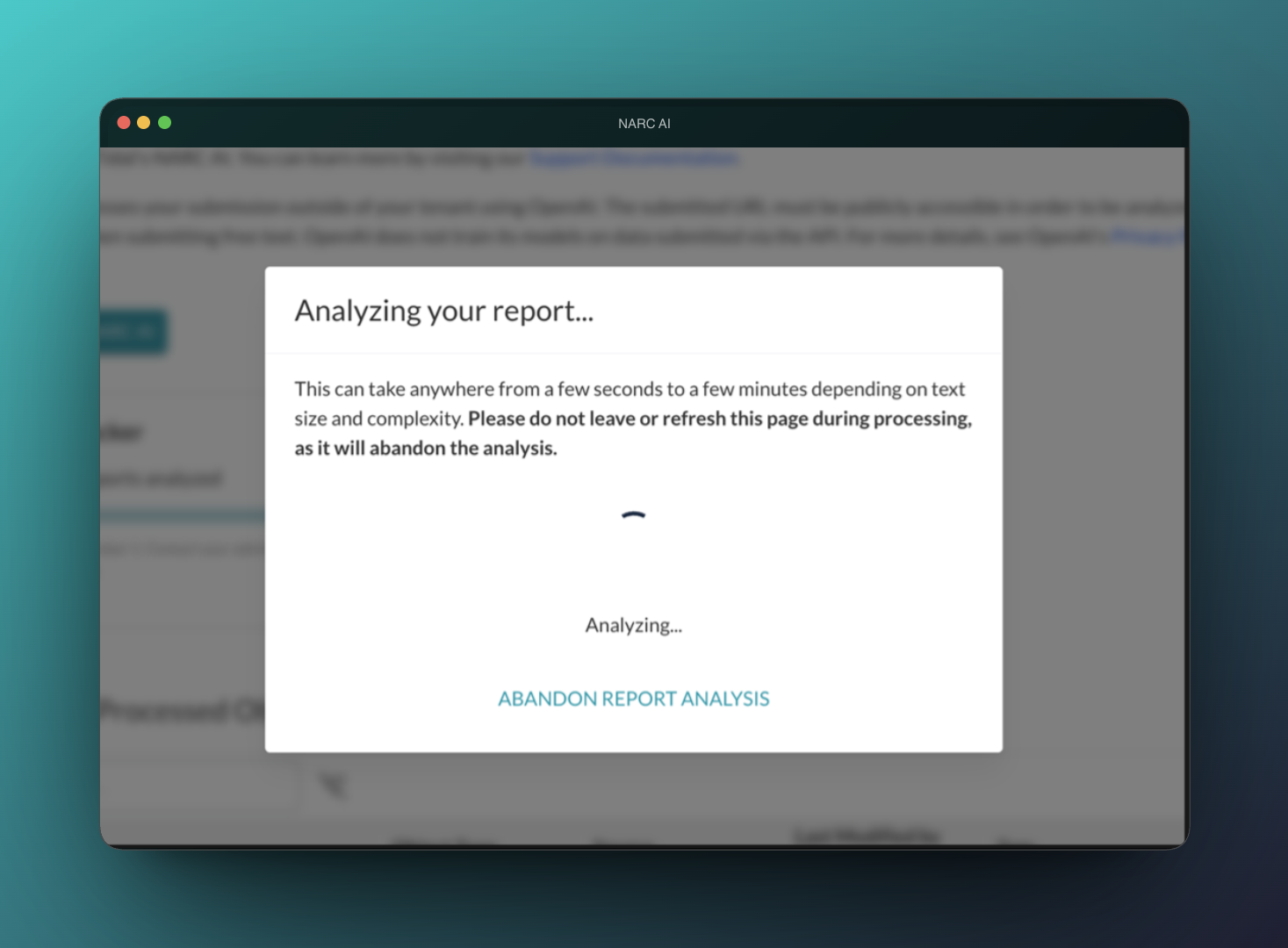

The design challenge wasn't just the happy path. It was every state in the workflow: what happens when a user doesn't have access? When the model is still processing? When results are partial? When the AI is confident but the analyst shouldn't be? Each of those states was a chance to either build or break trust — and none had been thought through.

Map every state. Treat each one as a trust decision.

I mapped the full workflow from first contact to results — access, creation, processing, output, and the edge cases at each stage. For every state: What does the user need to know here? What could they misread? What happens if they act on wrong information?

Every state annotated with what the user needs to know, what they might misread, and what the design does about it. This map drove every screen.
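To make the structure of that map concrete, here is a minimal sketch of how it could be held as data. Everything in it is an assumption for illustration: the `StateAnnotation` fields mirror the three questions above, the stage names come from the workflow list, and the example entries and helper names are hypothetical rather than the actual NARC map.

```python
from dataclasses import dataclass

# Stage names taken from the workflow described above.
STAGES = ("access", "creation", "processing", "output")


@dataclass(frozen=True)
class StateAnnotation:
    """One state in the workflow, annotated with the three trust questions."""
    stage: str
    state: str
    needs_to_know: str    # What does the user need to know here?
    could_misread: str    # What could they misread?
    design_response: str  # What does the design do about it?


def unannotated(annotations):
    """Return (stage, state) pairs where any of the three answers is blank."""
    return [
        (a.stage, a.state)
        for a in annotations
        if not (a.needs_to_know.strip()
                and a.could_misread.strip()
                and a.design_response.strip())
    ]


def uncovered_stages(annotations):
    """Return stages that have no annotated state at all."""
    covered = {a.stage for a in annotations}
    return [s for s in STAGES if s not in covered]


# Hypothetical entries, written only to show the shape of the map.
EXAMPLE_MAP = [
    StateAnnotation(
        stage="access",
        state="no access",
        needs_to_know="The feature exists but is not available at this permission level.",
        could_misread="That the AI found nothing.",
        design_response="State plainly what is and is not visible, and why.",
    ),
    StateAnnotation(
        stage="processing",
        state="model still processing",
        needs_to_know="Results are not ready yet.",
        could_misread="That an empty view means no findings.",
        design_response="Show an explicit in-progress state instead of an empty list.",
    ),
    StateAnnotation(
        stage="output",
        state="partial results",
        needs_to_know="Only part of the input has been analyzed.",
        could_misread="That the findings are complete.",
        design_response="",  # left blank on purpose to show the check below
    ),
]

print(unannotated(EXAMPLE_MAP))
print(uncovered_stages(EXAMPLE_MAP))
```

Run against the example entries, the two checks flag the partial-results state (no design response yet) and the creation stage (no states mapped). That is the kind of gap the map was meant to expose before any screen was drawn.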

Access design turned out to be more important than I'd initially expected. Users with different permission levels needed to encounter the feature differently — but all needed to understand what they were and weren't seeing, and why. Getting this wrong would have meant users forming incorrect expectations about what the AI could do for them.

Each state is honest about what the user can and can't see. No simulated previews, no implied capability that doesn't exist.

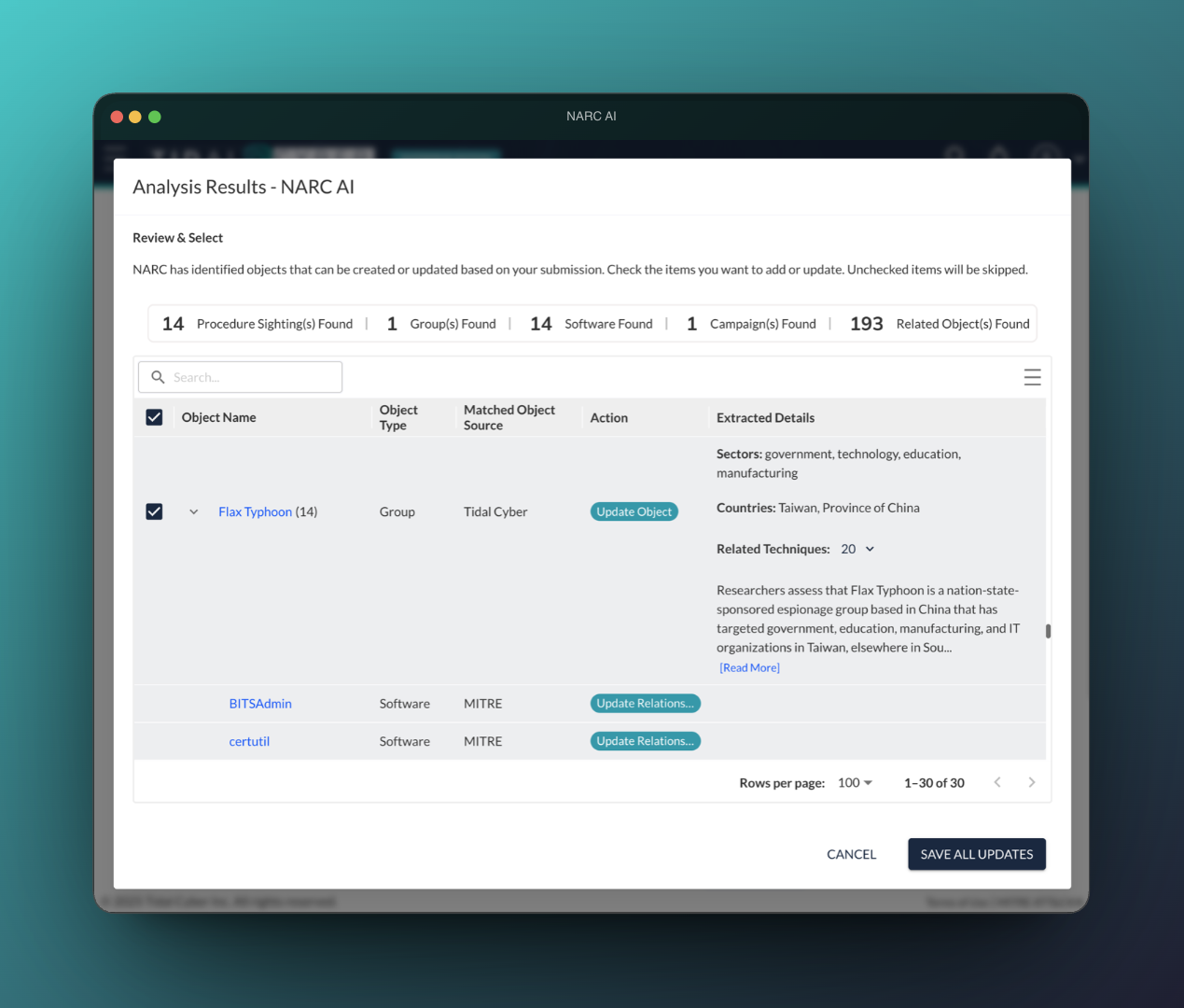

Source attribution visible in the primary results view, not hidden in a drawer. Analysts see the basis for each finding without an extra interaction.