Nefeli — dress with intention.

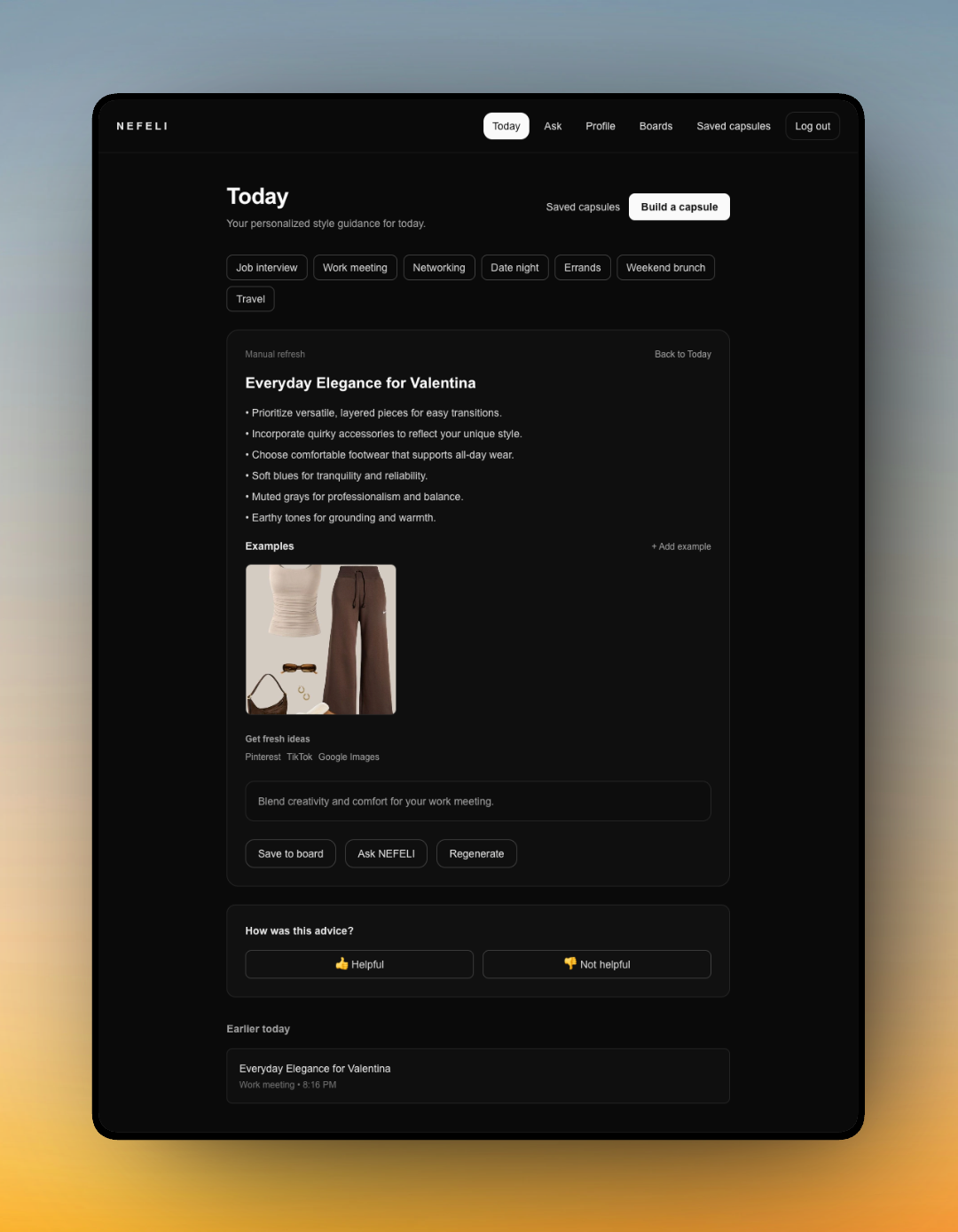

A trust-first approach to AI guidance in a subjective domain.

Nefeli is an AI-assisted outfit guidance tool built around a single design question: how do you make AI feel helpful rather than authoritative in a domain where there are no objectively correct answers? Most AI styling tools fall into one of three traps: they overwhelm users with options, issue recommendations without explanation, or demand so much input that the effort outweighs the value.

Nefeli explores a different approach: calm guidance, contextual reasoning, and clear limits on what the AI claims to know.

The trust problems in Nefeli are the same ones I work on in NARC, just at lower stakes. When analysts use NARC to evaluate AI-generated security findings, the questions are identical: Does the interface communicate the basis for the recommendation? Does it make the AI's limitations visible? Does it keep the human in control of interpretation?

Building Nefeli gave me a lower-stakes environment to prototype trust design patterns — provenance, explanation, constraint — that I then brought to production AI work. The domain doesn't matter; the design problems do.